Nobody's asking why Arnold Schwarzenegger has a newsletter.

They're too busy reading it.

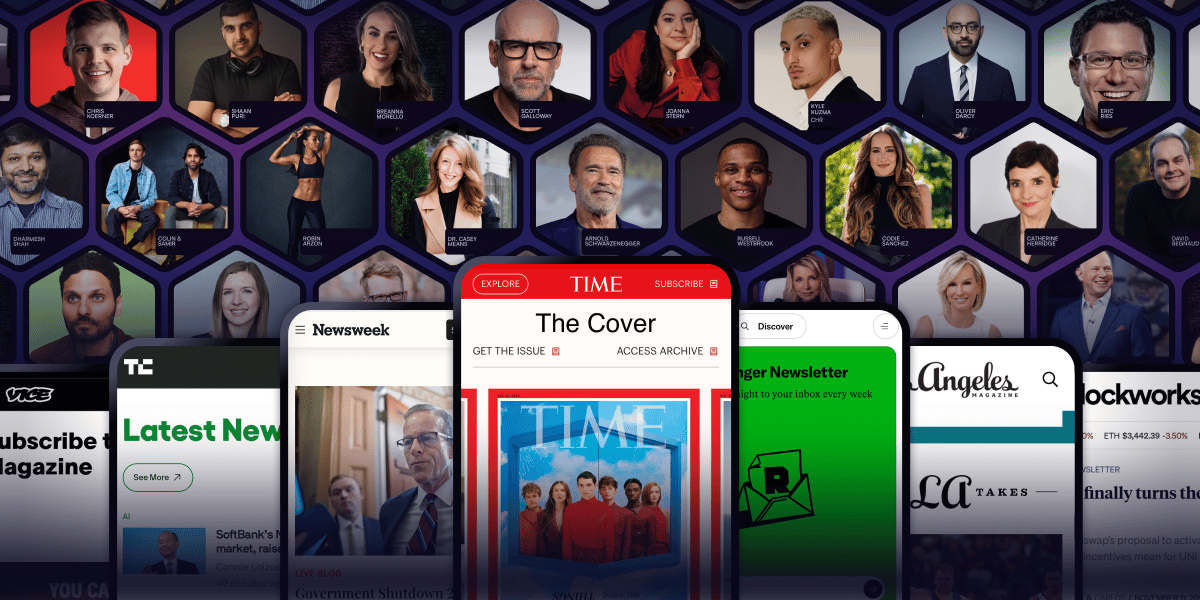

Arnold Schwarzenegger. Codie Sanchez. Scott Galloway. Colin & Samir. Shaan Puri. Jay Shetty. They all figured out the same thing: owned audiences compound, rented ones disappear. beehiiv is where they built theirs.

30% off your first 3 months with code PLATFORM30. Start building today.

Zymbos Intelligence · Wednesday 6 May 2026


This week the AI stack moved on multiple fronts at once. The US government formalised pre-deployment safety testing for frontier models. Anthropic committed $200bn to Google Cloud. The NHS restricted its open-source code over AI security fears. Microsoft moved AI agent governance from pilot to production. And Coinbase cut 14% of its workforce, citing AI efficiency directly. The infrastructure question is no longer theoretical.


GOVERNANCE · POLICY

US government to safety-test new frontier AI models before release

The US Commerce Department has signed new agreements with Google, Microsoft and xAI to evaluate their frontier AI models before they are made publicly available. The scheme builds on Biden-era pacts already in place with OpenAI and Anthropic, and extends pre-deployment testing to cover cybersecurity, biosecurity and disinformation risks. The evaluations focus on the most capable general-purpose models regardless of intended use, signalling a shift toward systemic oversight of the model layer rather than sector-specific applications. The White House is also reportedly considering a new AI working group to explore mandatory oversight procedures.

McGann's Take

For UK and European organisations, this sets a de facto global benchmark. If the most capable models face pre-deployment evaluation in the US, vendor roadmaps and procurement assurances will start to reflect that, whether or not domestic regulation requires it. The governance layer is now part of the model layer.


INFRASTRUCTURE · COMPUTE

Anthropic commits $200bn to Google Cloud as compute shifts from cost line to strategic contract

Anthropic has committed to spending approximately $200bn (around £158bn) on Google Cloud infrastructure and chips over the next five years, securing access to Google's latest TPU capacity for training and inference. The deal follows a separate commitment of up to $100bn on Amazon Web Services. Combined, Anthropic now has roughly $300bn in committed cloud infrastructure spend before those workloads are even fully defined. The shift is significant: for frontier AI labs, compute is no longer a variable cost managed quarter by quarter. It is a strategic asset locked in through multi-year contracts, reshaping the economics of every organisation that depends on these models downstream.

McGann's Take

Three hundred billion dollars in committed cloud spend from a single AI lab changes how you think about the infrastructure layer. Your AI strategy does not sit on neutral infrastructure. It sits on top of someone else's multi-year capital bet, and the pricing, availability and direction of that infrastructure will reflect those commitments whether or not you have a seat at the table.


UK · SECURITY

NHS England restricts open-source code repositories, citing AI-assisted hacking fears

NHS England has issued guidance requiring staff to make all previously public software repositories private by default, driven by concerns that advanced AI models could ingest the code and identify exploitable vulnerabilities. The UK's AI Security Institute assessed that the specific model cited is currently capable of attacking only weakly defended systems, and security experts have criticised the move on the grounds that open-source code is generally more secure through community scrutiny, not less. The episode illustrates how rapidly AI capability concerns are now influencing infrastructure and operational security decisions inside large public sector organisations, sometimes ahead of the evidence.

McGann's Take

The speed of this decision matters as much as the decision itself. A major public sector organisation changed its entire software visibility policy in response to a perceived AI threat that its own security institute assessed as limited. That is a governance problem, not a technology problem. It is exactly what happens when AI risk frameworks are built reactively rather than in advance of the pressure.


ENTERPRISE · ORCHESTRATION

Microsoft takes Agent 365 out of preview as shadow AI is declared an enterprise threat

Microsoft has moved Agent 365 to general availability, giving enterprise IT and security teams a unified control plane to observe, govern and secure AI agents wherever they run: inside Microsoft's own ecosystem, on Amazon Bedrock and Google Cloud, on employee endpoints, and across SaaS platforms built by third-party partners. The launch framing is notable. Microsoft explicitly names shadow AI, meaning unsanctioned AI tool use by employees, as an enterprise security threat the product is designed to address. The move positions AI agent governance as a core enterprise infrastructure product rather than an optional add-on.

McGann's Take

Naming shadow AI as an enterprise threat in a product launch is a significant signal. It means Microsoft is selling to the CISO and the CTO simultaneously. The orchestration layer is no longer just about workflow efficiency; it is about knowing which AI agents are running in your organisation, what they have access to, and what they are doing. That is an infrastructure problem, and it is now a product category.
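To make the inventory question concrete, here is a minimal TypeScript sketch of the comparison any such control plane has to perform: what is actually running versus what has been approved. It is not Agent 365's API or data model. The `ObservedAgent` and `SanctionedAgent` types, the scope strings and the agent names are all hypothetical. Anything running without an approval record is flagged as shadow AI, and approved agents holding access beyond their approval are flagged separately.

```typescript
// Hypothetical types for illustration; not the Agent 365 API or data model.
interface ObservedAgent {
  id: string;
  runtime: string; // where it was seen running, e.g. "cloud", "endpoint", "saas"
  scopes: string[]; // systems or data the agent can currently access
}

interface SanctionedAgent {
  id: string;
  allowedScopes: string[]; // access granted at approval time
}

interface Finding {
  agentId: string;
  kind: "unsanctioned" | "excess-access";
  detail: string;
}

// Compare what is running against what has been approved.
function auditAgents(observed: ObservedAgent[], sanctioned: SanctionedAgent[]): Finding[] {
  const registry = new Map<string, Set<string>>();
  for (const agent of sanctioned) {
    registry.set(agent.id, new Set(agent.allowedScopes));
  }

  const findings: Finding[] = [];
  for (const agent of observed) {
    const allowed = registry.get(agent.id);
    if (allowed === undefined) {
      // Running but never approved: shadow AI.
      findings.push({ agentId: agent.id, kind: "unsanctioned", detail: `seen on ${agent.runtime}` });
      continue;
    }
    // Approved, but holding access beyond what was granted.
    const extra = agent.scopes.filter((scope) => !allowed.has(scope));
    if (extra.length > 0) {
      findings.push({ agentId: agent.id, kind: "excess-access", detail: extra.join(", ") });
    }
  }
  return findings;
}

const findings = auditAgents(
  [
    { id: "invoice-bot", runtime: "cloud", scopes: ["erp:read"] },
    { id: "sales-notes", runtime: "endpoint", scopes: ["crm:read", "email:send"] },
    { id: "hr-helper", runtime: "saas", scopes: ["hr:read"] },
  ],
  [
    { id: "invoice-bot", allowedScopes: ["erp:read"] },
    { id: "sales-notes", allowedScopes: ["crm:read"] },
  ],
);
console.log(findings);
```

With the sample data, `sales-notes` is reported for holding `email:send` beyond its approval and `hr-helper` is reported as unsanctioned. The hard part in practice is populating the observed list across clouds, endpoints and SaaS, which is the gap a product in this category is trying to close.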


ENTERPRISE · WORKFORCE

Coinbase cuts 14% of staff, citing AI efficiency as a direct driver of the restructuring

Coinbase is cutting approximately 700 roles, around 14% of its workforce, at a cost of between $50m and $60m (roughly £39m to £47m) in severance. CEO Brian Armstrong cited AI efficiency explicitly as a contributing factor, stating that engineers are now shipping code in days rather than weeks, and that non-technical teams are automating workflows that previously required dedicated headcount. Armstrong framed the shift as a move toward smaller, AI-leveraged teams. It is one of the most direct and senior-level attributions of workforce restructuring to AI productivity gains seen at a major public company this year.

McGann's Take

This is the AI stack delivering measurable operational output and a CEO saying so publicly. The capability investment argument matters here: teams that built genuine AI fluency are shipping faster and running leaner. Teams that signed the contracts but skipped the capability work are getting the bill without the returns. The infrastructure was the same. The outcomes were not.


This Week's Analysis

Strategy, Infrastructure and the AI Stack

Most AI strategy documents are written long before the team has built anything in production. Most production systems are built without much reference to the strategy document. The gap between the two is where most of the wasted spend sits, and it is wider in most organisations than anyone is comfortable admitting.

The AI stack is where strategy and production are supposed to meet. A coherent stack has three layers. The model layer covers which models you run, where they run, and how you route between them. The data layer covers what knowledge you ground those models in, where it lives, and how you keep it current. The orchestration layer covers how the pieces communicate with each other, where human review is inserted, and how outputs flow back into systems of record.

Where most teams stop

Most teams optimise one of these layers and leave the others underspecified. The model layer gets attention because it is visible and model benchmarks are easy to compare. The data layer gets partial attention when something breaks. The orchestration layer, which is the layer that determines whether AI actually changes how work gets done, is frequently an afterthought. McKinsey's March 2026 research notes that nearly two-thirds of enterprise AI efforts remain stuck in pilots, and attributes the stall to leadership adoption, people readiness, and workflow integration. These are orchestration problems wearing an organisational costume. The question is not which model to run. It is whether the stack beneath it is built to get AI from experiment to operation.
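One way to make "underspecified" measurable is to write the three layers down as a typed specification and list the questions each layer has not yet answered. The sketch below assumes a hypothetical `StackSpec` shape whose fields mirror the questions in the three-layer description; the model names, hosting and data source are placeholders, not recommendations.

```typescript
// Hypothetical record of the decisions each layer needs; field names are illustrative.
interface StackSpec {
  model: { models: string[]; hosting?: string; routing?: string };
  data: { sources: string[]; location?: string; refresh?: string };
  orchestration: { integration?: string; humanReview?: string; systemOfRecord?: string };
}

type Layer = keyof StackSpec;

// List the questions each layer has not yet answered.
function underspecified(spec: StackSpec): Record<Layer, string[]> {
  const gaps: Record<Layer, string[]> = { model: [], data: [], orchestration: [] };
  const { model, data, orchestration } = spec;

  if (model.models.length === 0) gaps.model.push("which models we run");
  if (!model.hosting) gaps.model.push("where they run");
  if (!model.routing) gaps.model.push("how we route between them");

  if (data.sources.length === 0) gaps.data.push("what knowledge grounds the models");
  if (!data.location) gaps.data.push("where that data lives");
  if (!data.refresh) gaps.data.push("how it is kept current");

  if (!orchestration.integration) gaps.orchestration.push("how the pieces communicate");
  if (!orchestration.humanReview) gaps.orchestration.push("where human review is inserted");
  if (!orchestration.systemOfRecord) gaps.orchestration.push("how outputs reach systems of record");

  return gaps;
}

// A model-first spec: the visible layer is detailed, the others are not.
const modelFirst: StackSpec = {
  model: { models: ["model-a", "model-b"], hosting: "vendor API", routing: "cheapest model that passes evals" },
  data: { sources: ["policy wiki"] },
  orchestration: {},
};

console.log(underspecified(modelFirst));
```

For the example spec, the model layer reports no gaps, the data layer two and the orchestration layer all three: the pattern described above, where the most visible layer gets the attention and the layer that changes how work gets done is left blank.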

The infrastructure is often in place. The tool contracts are signed. The organisation, the skills, and the operating model sitting alongside the technology are frequently not ready. That is what the numbers are actually measuring. If you are heading into a planning cycle with an AI initiative on the table, the Prompt Pocket this week walks through all three stack layers and surfaces the gaps that tend to emerge six months in rather than before the build starts. Use it before you commit the budget.

Infrastructure or capability?

Most AI investment conversations are framed as build versus buy. That is the wrong axis for most organisations. The sharper choice is between infrastructure investment and capability investment.

Infrastructure investment is concrete. It appears on a budget line as model contracts, orchestration platforms, integration work, and security review. It pays back over time through reduced unit cost and faster product velocity once deployed. Capability investment is less visible. It lives in the team's skill stack: prompt engineering, evaluation discipline, AI-aware product design, and governance that gets used rather than filed.

Boards approve infrastructure more readily. It looks real. Capability looks soft. Six months later, the infrastructure exists, the dashboards exist, the contracts exist, and the team is not using any of it well, because the capability layer was underfunded from the start.

The corrective is simple to state and difficult to implement. No infrastructure investment should be approved without a matching capability investment alongside it. Two budget lines, not one. If the capability line cannot be funded, the infrastructure line should not proceed either, because the infrastructure is unlikely to deliver value without it. In practice, this means training time, hiring for new skills, and tolerance of slower ramps and early inefficiency. None of these score well on a standard return on investment template. They pay back asymmetrically. The second AI initiative is dramatically cheaper and lower risk if the first one included the capability investment the stack actually needed. If it did not, the second initiative starts over.

The hardest version of this argument is the most practical one: if a board will not fund the capability line, that is a signal about organisational readiness, not about the technology. It is worth surfacing before the infrastructure spend is committed, not six months afterwards.
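The two-budget-line rule can be expressed as a simple approval gate. This is a minimal sketch, assuming a hypothetical `Proposal` type with one figure per budget line; the figures are illustrative and the currency is unspecified. The argument does not define what counts as a "matching" amount, so the gate only checks that a capability line is funded whenever infrastructure spend is requested.

```typescript
// Hypothetical proposal with the two budget lines the rule calls for.
interface Proposal {
  name: string;
  infrastructure: number; // contracts, platforms, integration work, security review
  capability: number; // training time, hiring for new skills, slower ramps
}

type Decision = { approved: true } | { approved: false; reason: string };

// Infrastructure spend proceeds only alongside a funded capability line.
function review(p: Proposal): Decision {
  if (p.infrastructure > 0 && p.capability <= 0) {
    return {
      approved: false,
      reason: `${p.name}: no capability line funded; treat as a readiness signal before committing infrastructure spend`,
    };
  }
  return { approved: true };
}

console.log(review({ name: "support copilot", infrastructure: 400000, capability: 0 }));
console.log(review({ name: "support copilot", infrastructure: 400000, capability: 120000 }));
```

The rejection carries a reason rather than a bare "no", reflecting the point that an unfunded capability line says something about organisational readiness, not about the technology.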


Vercel AI SDK

DEVELOPER TOOLS · MODEL ROUTING · TYPESCRIPT

What it is

Vercel AI SDK is a free, open-source TypeScript toolkit that gives you a single unified interface for working with multiple model providers including OpenAI, Anthropic, Google, and custom or self-hosted endpoints. Rather than wiring each provider's API separately, the SDK handles the abstraction layer, so adding or swapping providers becomes a configuration change rather than a rebuild. Streaming responses and tool calling are handled cleanly across providers, which is where integration effort typically accumulates in manual builds.

Where it works well

The optional AI Gateway adds model routing so different workloads go to different models without changing application code. The Gateway bills at provider list prices with no markup; a $5 monthly credit is included, after which billing is usage-based. For TypeScript teams on React or Next.js, where documentation and examples are strongest, the time-to-integration advantage is real.

Where to watch

The first-class developer experience leans toward React and Next.js, though the SDK also supports Vue, Svelte, and Node.js. Teams outside the React ecosystem or building primarily backend or agentic architectures will find documentation thinner and need more independent exploration. This is an editorial observation from working with the toolkit, not a limitation in the SDK's capabilities.
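The provider abstraction and workload routing described above can be illustrated without any provider SDK. The sketch below is a hypothetical, minimal pattern, not the Vercel AI SDK's own types or the AI Gateway's configuration format: a shared `ChatProvider` interface, stub providers that echo instead of calling an API, and a routing table that maps each workload to a `provider/model` string (the model names are placeholders). In the SDK itself the equivalent step is passing a different model to calls such as `generateText`; the point here is only that swapping a route is a data change, not a code change.

```typescript
// Hypothetical provider interface; not the Vercel AI SDK's own types.
interface ChatProvider {
  complete(model: string, prompt: string): Promise<string>;
}

// Offline stand-ins: a real provider would call its API here.
function stubProvider(name: string): ChatProvider {
  return {
    async complete(model, prompt) {
      return `[${name}/${model}] ${prompt}`;
    },
  };
}

const providers: Record<string, ChatProvider> = {
  openai: stubProvider("openai"),
  anthropic: stubProvider("anthropic"),
};

// Routing lives in data: each workload maps to "provider/model".
const routes: Record<string, string> = {
  summarise: "anthropic/model-x",
  classify: "openai/model-y",
};

// Application code asks for a workload, never for a specific provider.
async function run(workload: string, prompt: string): Promise<string> {
  const target = routes[workload];
  if (target === undefined) throw new Error(`no route for workload "${workload}"`);
  const slash = target.indexOf("/");
  if (slash <= 0) throw new Error(`malformed route "${target}"`);
  const providerName = target.slice(0, slash);
  const model = target.slice(slash + 1);
  const provider = providers[providerName];
  if (provider === undefined) throw new Error(`unknown provider "${providerName}"`);
  return provider.complete(model, prompt);
}

run("summarise", "Q3 incident report").then(console.log);
```

Moving `summarise` to another provider means editing one entry in `routes`; `run` and everything that calls it stay unchanged.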

Verdict

A strong choice for TypeScript teams wanting to stop wiring provider APIs manually and start routing intelligently across models. The abstraction is well-designed, the Gateway pricing is transparent and low-risk to start, and the free SDK removes any barrier to evaluation. Teams outside React or building agentic backend systems should factor in additional setup time.

Some links are affiliate. Zymbos AI may earn a small commission at no extra cost to you.


Audit Your AI Stack Across the Three Layers

STRATEGY · ARCHITECTURE · Works in Claude or ChatGPT

Use this before committing budget to an AI initiative. Designed for technical leads and solution architects, it walks through the model, data, and orchestration layers and surfaces the gaps that tend to appear six months into a project rather than before it starts. Paste the prompt below into Claude or ChatGPT, answer the questions it asks, and you will have a structured gap assessment in around 20 minutes.

I am scoping an AI initiative for [describe the initiative and the organisation type]. Before we commit to build or budget, I want to audit our readiness across the three AI stack layers. For each layer, ask me the questions that would surface critical gaps, then summarise the risks and recommended next steps.

Layer 1, the model layer: which models are we using, where do they run, how do we route between them, and what happens when a model provider changes pricing or terms?

Layer 2, the data layer: what knowledge are we grounding the models in, where does that data live, how do we keep it current, and what are our data quality and governance risks?

Layer 3, the orchestration layer: how do the pieces communicate with each other, where does human review happen, how do outputs flow back into our systems of record, and what is our failure escalation path?

After I have answered, give me a gap assessment per layer, a ranked list of the three biggest risks, and a recommended sequence for addressing them before the build starts.


Closing Perspective

The stack is the strategy

There is a version of AI adoption where the model is the strategy. Pick the best one, integrate it, wait for results. Most organisations have discovered this does not work, or they are currently in the process of discovering it.

The stack is the strategy. Not because of the technology itself, but because a coherent stack forces you to answer questions that strategy documents tend to skip: what data are you grounding the model in, where is human judgment inserted, and how are outputs actually changing how decisions get made? These are organisational questions, not technical ones.

The capability investment argument in this week's analysis is, at root, the same point. You cannot buy your way to an organisation that uses AI well. The technology is ready. The harder work is building the team and the operating model alongside it. That is still where most organisations are behind, and it is where the real returns are waiting.

John McGann

Founder, Zymbos AI


Zymbos Intelligence

zymbos.ai

You're receiving this because you subscribed at zymbos.ai

© 2026 Zymbos Intelligence · John McGann · London, UK

Zymbos Ltd · Company No. 16198848 · Teddington, England